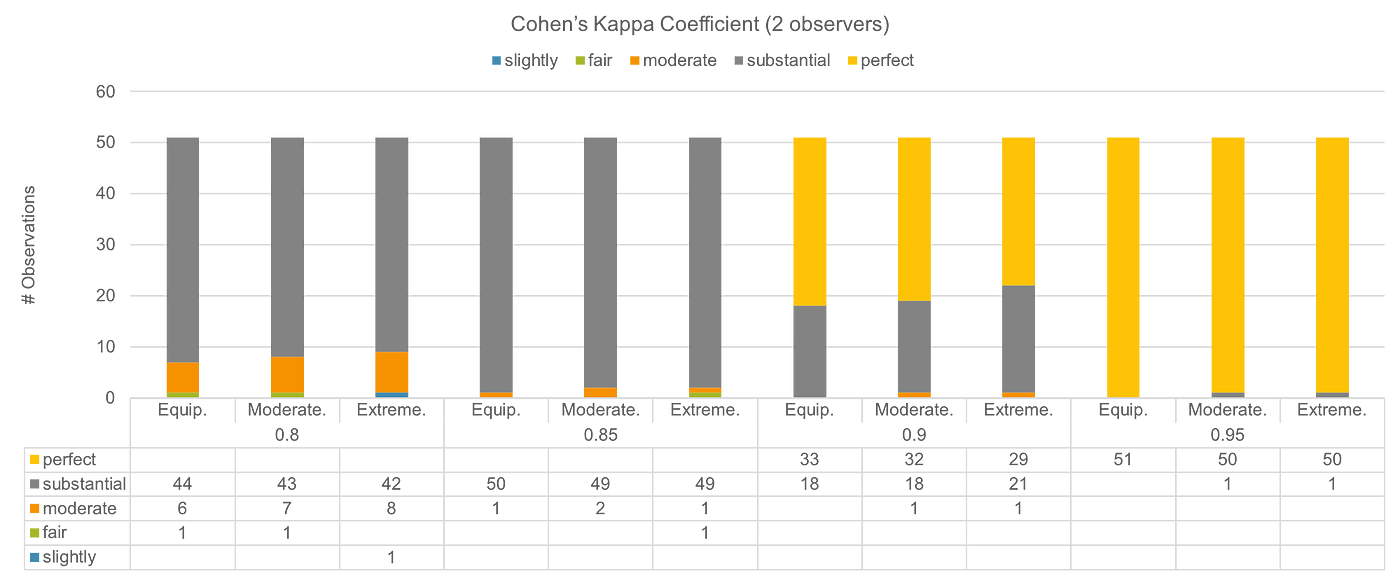

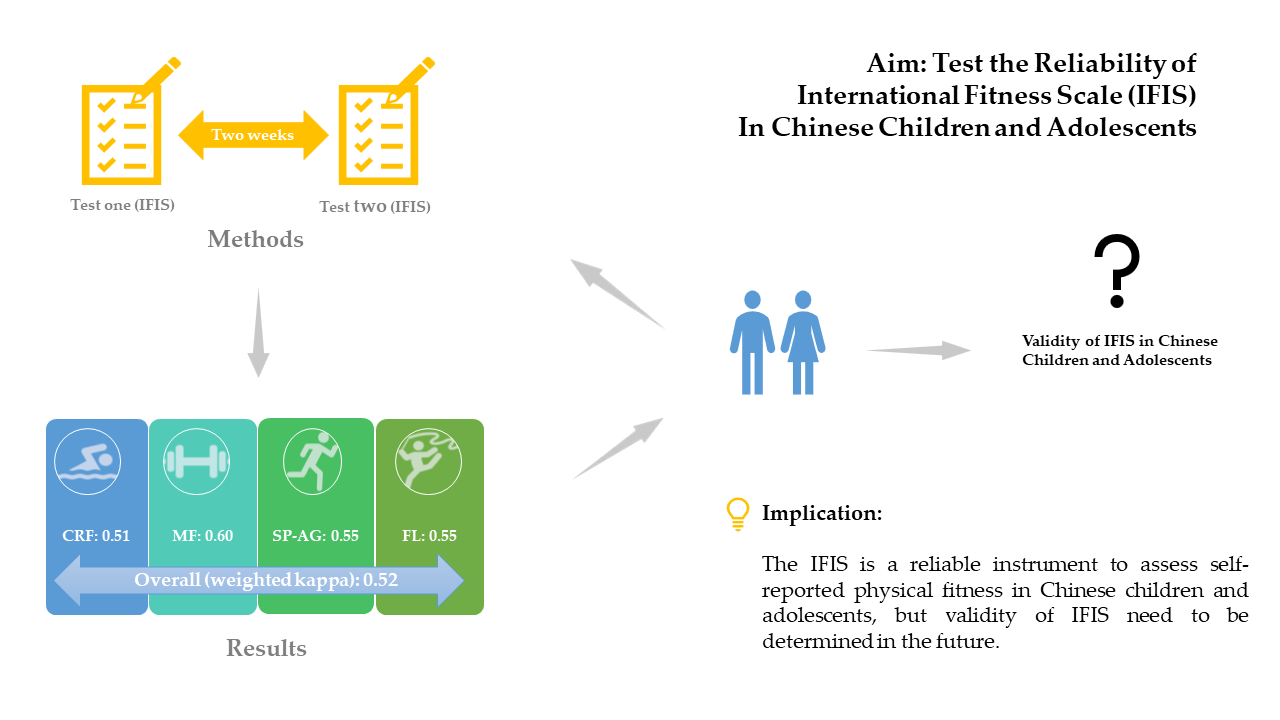

Children | Free Full-Text | Reliability of International Fitness Scale (IFIS) in Chinese Children and Adolescents

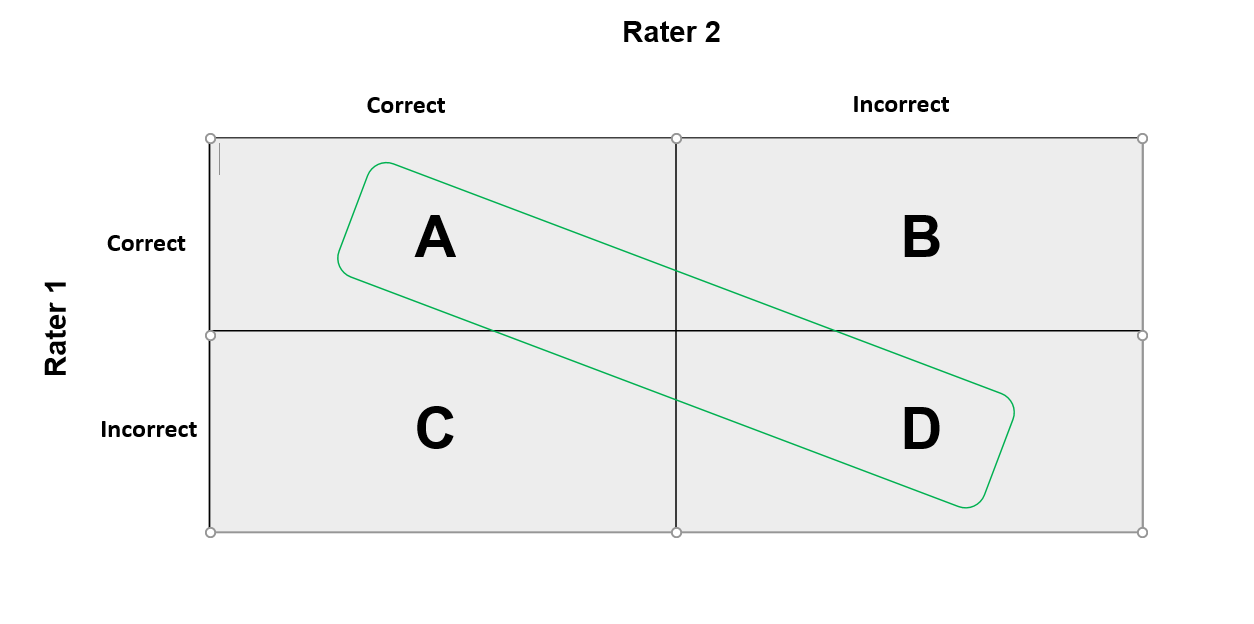

Interpretation of Kappa Values. The kappa statistic is frequently used… | by Yingting Sherry Chen | Towards Data Science

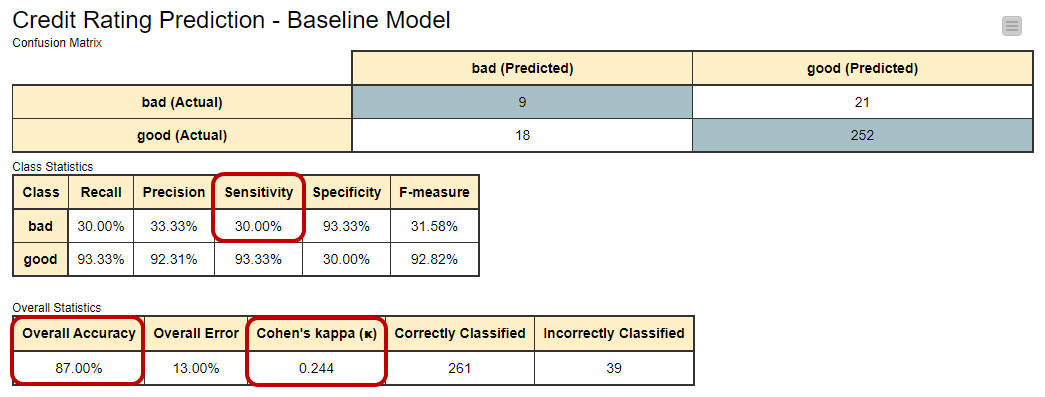

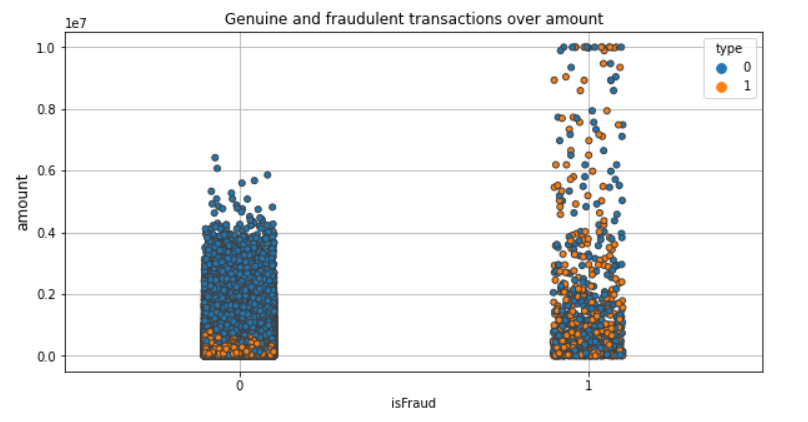

Importance of Mathews Correlation Coefficient & Cohen's Kappa for Imbalanced Classes | by Sarit Maitra | Medium

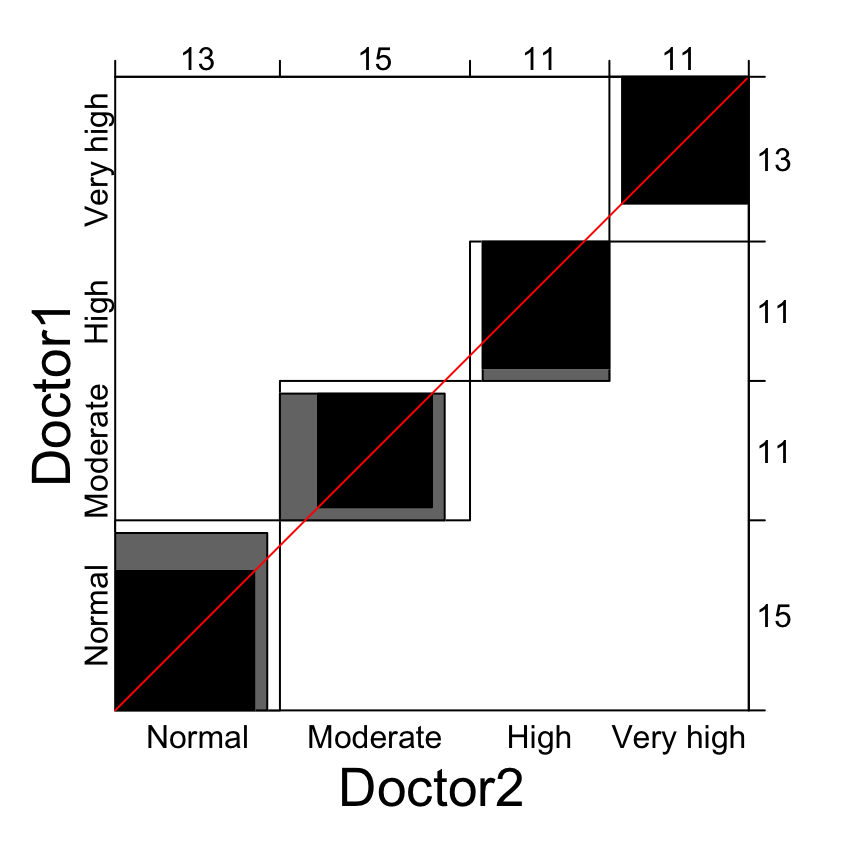

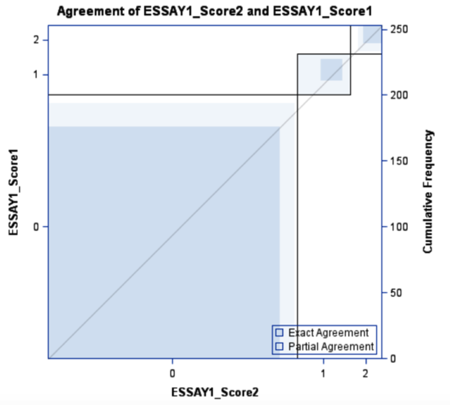

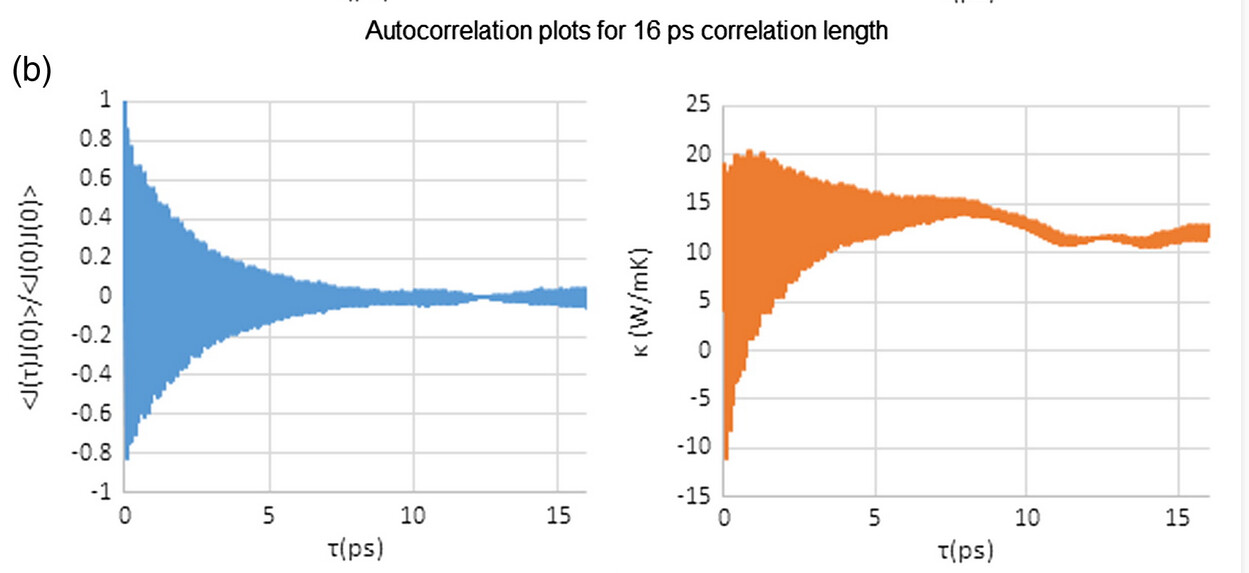

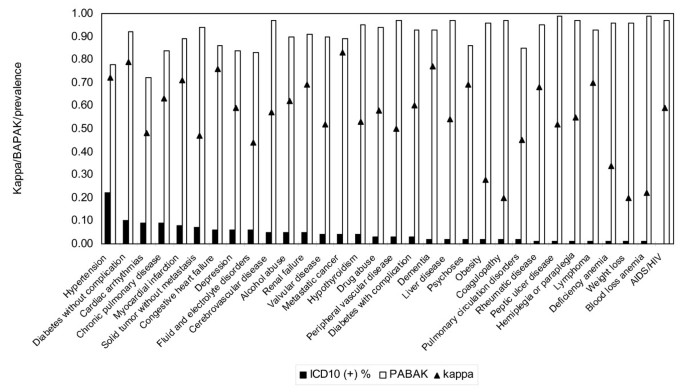

Measuring agreement of administrative data with chart data using prevalence unadjusted and adjusted kappa | BMC Medical Research Methodology | Full Text

![The Matthews Correlation Coefficient (MCC) is More Informative Than Cohen's Kappa and Brier Score in Binary Classification Assessment | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/331013c1275d9f60a70eb3aa0518e8ec24f35713/5-Figure1-1.png)

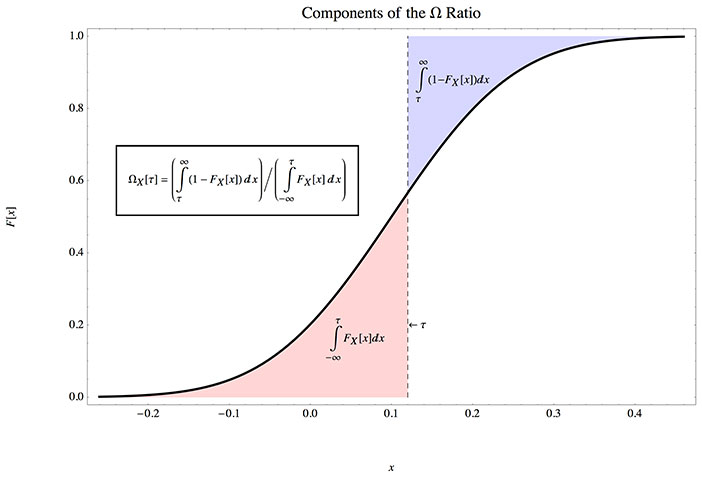

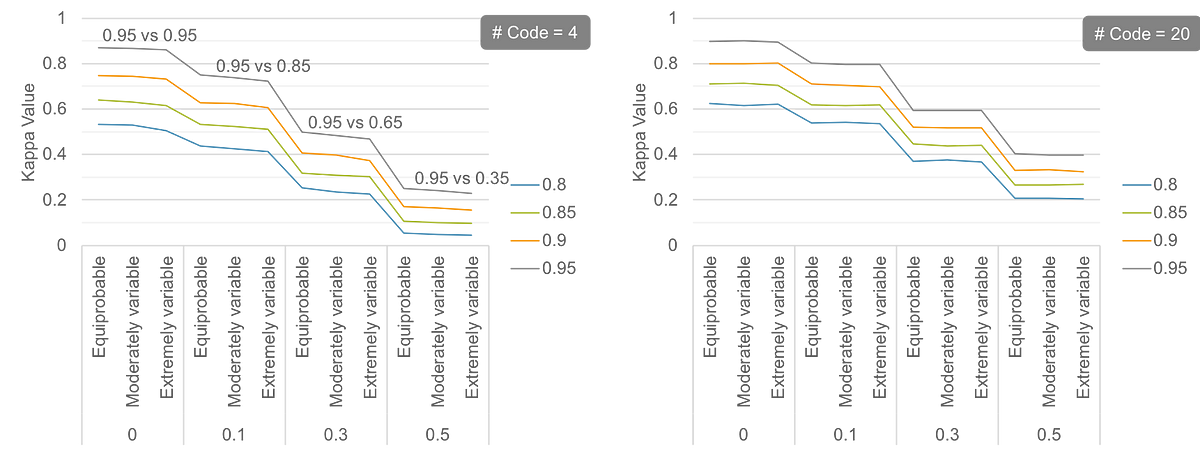

[PDF] The Matthews Correlation Coefficient (MCC) is More Informative Than Cohen's Kappa and Brier Score in Binary Classification Assessment | Semantic Scholar

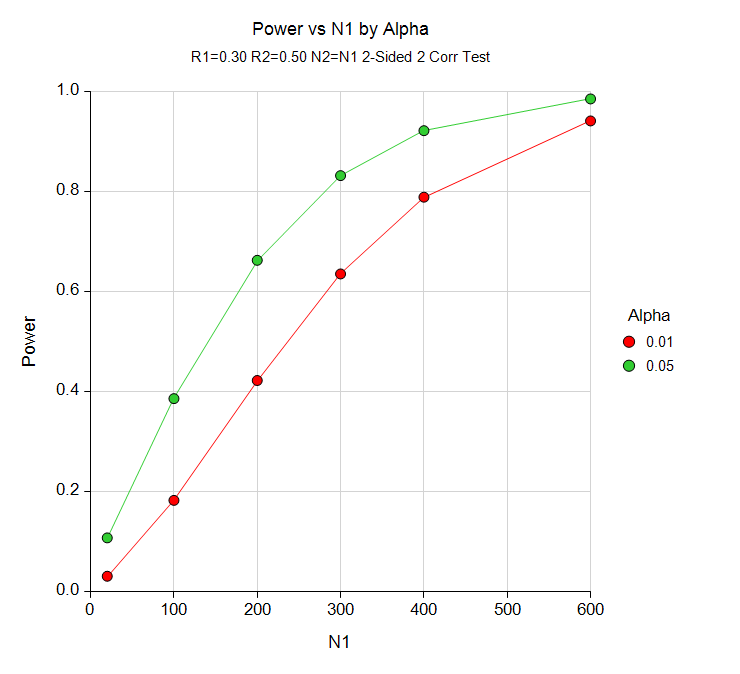

When do we use Kappa Statistic as a measure of model performance vs Accuracy - techniques - Data Science, Analytics and Big Data discussions

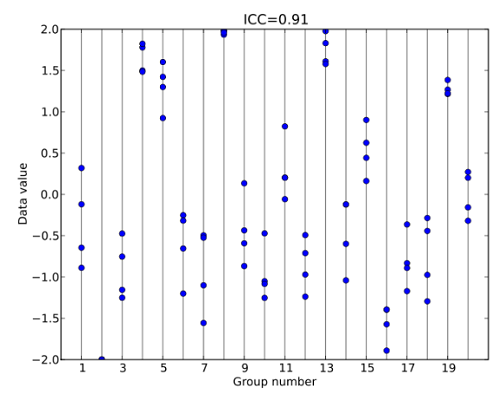

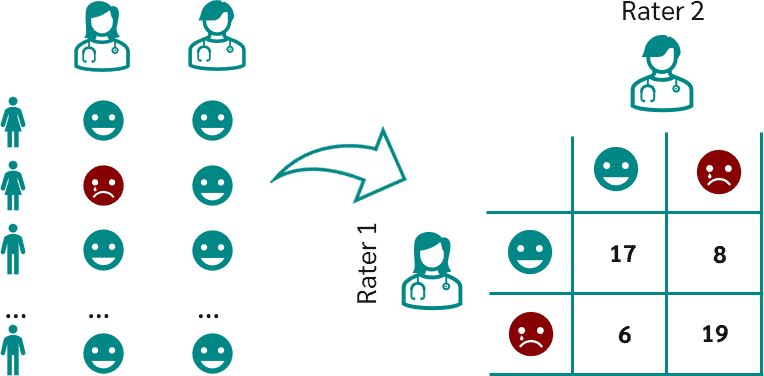

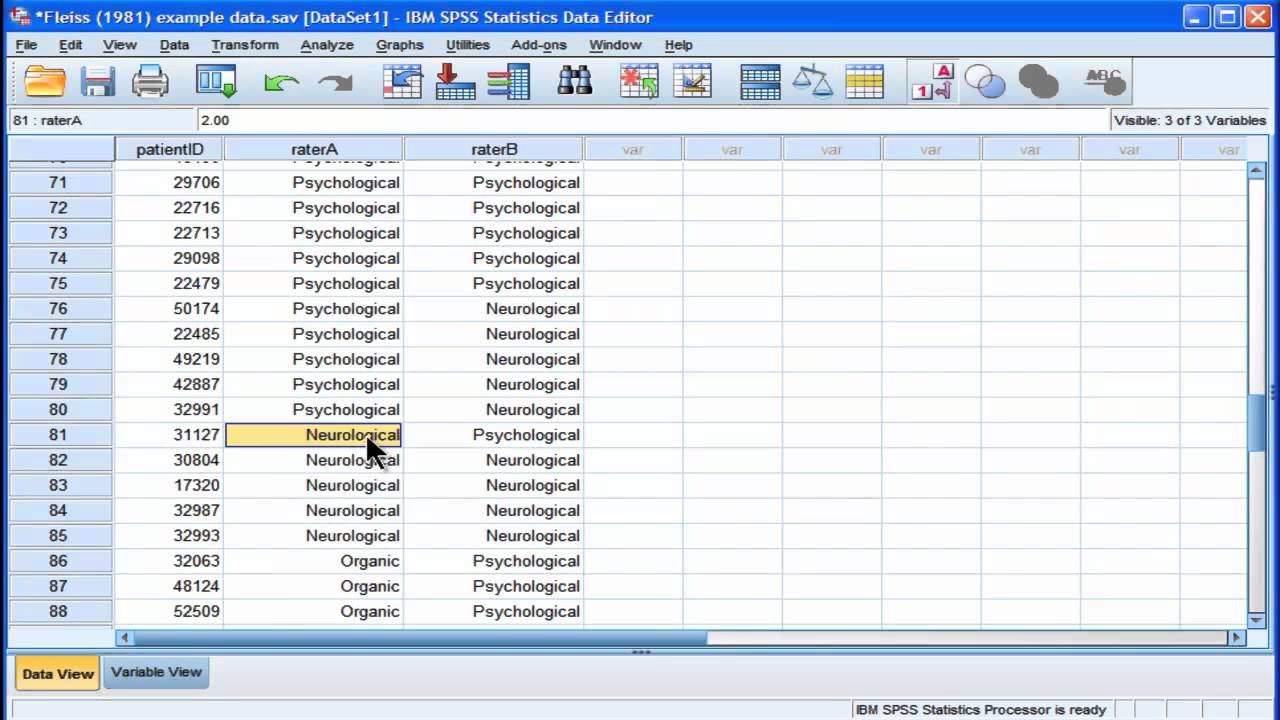

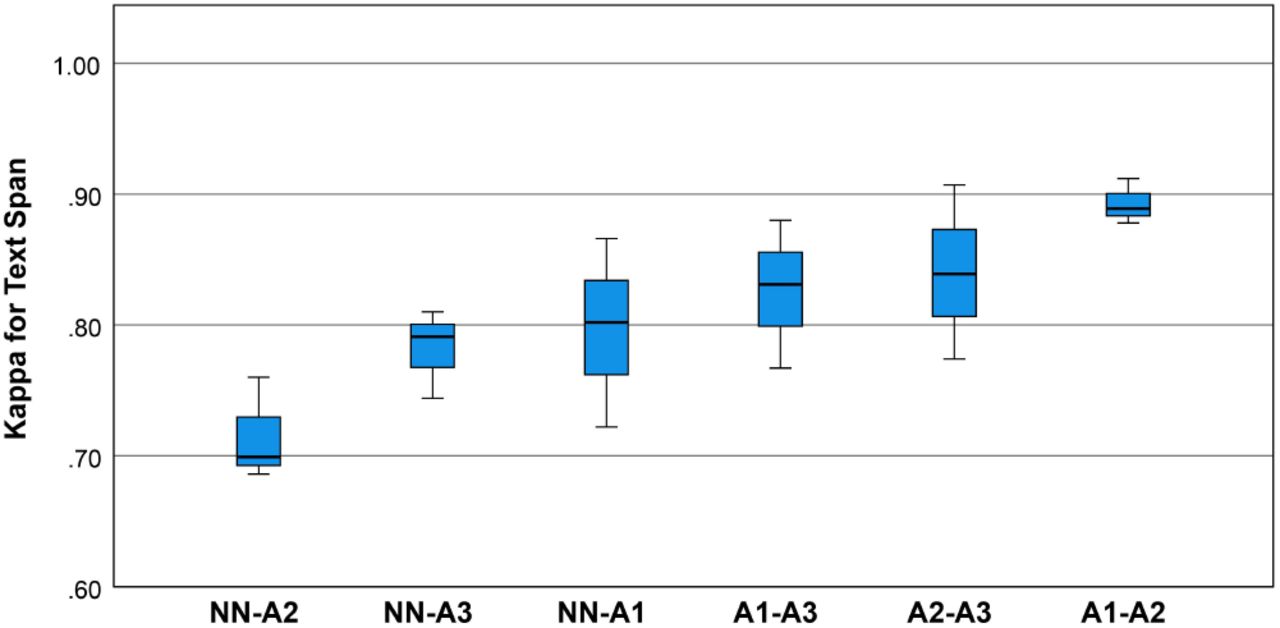

Inter-Rater Agreement for the Annotation of Neurologic Concepts in Electronic Health Records | medRxiv

Kappa Statistic is not Satisfactory for Assessing the Extent of Agreement Between Raters | Semantic Scholar